What Does "Every Page Is Page One" and "Include It All, Filter It Afterward" Mean?

One of the more memorable presentations I attended at Lavacon in Portland was Mark Baker's "Include It All, Filter It Afterward." You can view the slides from his presentation here. I also embedded them from SlideShare below.

"Every Page Is Page One"

Mark used David Weinberger's Too Big to Know book as a foundation for some of his arguments. Mark explained that before the Internet, knowledge had a much higher cost to access. By cost to access, I mean you had to travel to the library and search out books from card catalogs, or something similarly painful. When you finally found the book, usually after a great deal of effort, you treasured its contents because it required so much to acquire.

Additionally, the book was laborious to produce for the writer and publisher, involving multiple drafts, peer reviews, editorial reviews, specialized layouts and formats for printing, distributing, etc. It took a lot of effort to get that book to you.

As a result, you tended to read the book more thoroughly. You wouldn't just read a page and toss it aside, because you couldn't get another book without spending a lot of time, money, and effort. Knowledge from books was precious and expensive.

With the Internet, however, the cost to access knowledge is almost nothing — the click of a mouse. You search for a term, and if you don't like the result, there are dozens more to click and read, all within a few seconds. It's easy to throw away an ineffective result. You may skim a few paragraphs and, if the information isn't readily visible and consumable, you can click back and try another. Clicking different links in Google's search results doesn't cost you anything, so why should you spend time on an uninformative site? There's no investment, so you can discard it as fast as you find it.

The ease of publication also lowers the value of knowledge. With two million new blog posts published a day, why should anyone have much investment in a single post? The whole act of writing, publishing, and distributing probably took less than an hour. As such, the value of that knowledge decreases. Discarding one blog post in favor of another is common and practical. When I move through my RSS feed, I give titles an average of three seconds before moving on.

Mark says that because of the low cost to access knowledge, it's much more likely that someone reads 1 page from 10 sources than 10 pages from 1 source. The web has reduced the cost of knowledge so much that users tend to jump from source to source, reading only a page or so before moving on to somewhere else.

Because users may only view one page, you have to present enough information on that page so that it makes sense to the reader, so that its meaning can be complete and whole enough to deliver value to the user no matter where the topic is in your help system. For the user, every page is the beginning and end, or "every page is page one."

Mark lists several characteristics of an Every Page Is Page One topic. Such topics are self-contained, establish their own context, conform to a common type, and link richly.

"Include It All. Filter It Afterward."

The argument about "Every Page Is Page One" transitions to another, related argument: "Include It All. Filter It Afterward."

The days when you carefully organized content into groups that made sense, when you arranged content in books in a table of contents in the online help to be processed in a fixed order — all those days are over, because the user is no longer encountering and interacting with a book. The paradigm has changed. The user either interacts with a single page from your site, or more ideally, the user interacts with information as he or she has chosen to organize it, such as a grouping of all articles that have a specific hashtag.

Mark explains:

It is no longer the writer's job to filter and organize content for the reader. In the book world, the physics and economics of paper meant that the writer had to act as a filter, carefully selecting a small and highly organized set of information to provide to the reader. But on the web, the power to filter and organize information passes into the hands of the reader. Rather than seeking out content silos and then searching within them, readers prefer to Google the entire Web and then select and filter the results they receive. In the words of David Weinberger, their preferred strategy is, "include it all, filter afterward".

Despite this, writers tend to approach the web as simply another publishing medium, where they will make filtered and ordered content available to readers in a form that assumes the reader is looking at their content in isolation. The reality is that most readers are encountering their content as just one item in a set of search results -- they are including everything and filtering afterwards. To better serve readers who seek information this way, writers need to change from creating content that is filtered and ordered to creating content that is easy for readers to filter and order for themselves.

Rather than spending time organizing and arranging content for the user, Mark says it's better to provide filtering mechanisms — even if it's just search results — for users to organize the content themselves.

Beyond search engines, the Internet is rich with sites that provide alternative organization tools. For example, on Twitter, users add hashtags to the tweets to sort and organize the content. I add #techcomm to my tweets, and look for other tweets with the same #techcomm hashtag. Twitter didn't come up with this hashtag. The users did. Twitter just provided the tool.

When you search on Google, the search results appear in an unordered list, but Google provides tools for you to filter the results. You can filter by images, maps, shopping, books, videos, news, places, blogs, flights, discussions, recipes, and patents. In other words, Google gives you tools to organize the mass of content for yourself, not only sorting by keyword but also by type.

With Digg and Reddit, rather than organizing the content for readers, the sites provide voting tools that let users vote articles up or down. The most popular articles rise to the top, which increases their visibility.

Pinterest also doesn't provide an explicit organization of content for users. Instead, Pinterest provides a pinboard that allows users to pin items on boards with specific topics. You can then view the most popular pins, or the most popular pins based on others in your social network. This allows you to view content based on what your friends find interesting.

LinkedIn is another example of a site that provides a tool to allow users to organize the content themselves, rather than organizing it for the users. Mark says, "LinkedIn contains massive amounts of information from thousands of users, and gives you the tools to filter what you see." You can see updates from other people that you link to, and you can also join groups and participate in discussions of topics that you select. As a user, you control the kind of information you see on the site.

Weinberger's ideas for filtering are not so different from the ideas in his previous book, Everything Is Miscellaneous. In that book, Weinberger argued that we should attach metadata to everything, and then let the user select the metadata he or she wants in order to view all objects with the same metadata.

In Weinberger's model, you write as much relevant information as you can, add appropriate metadata to the content, and then let the user filter and organize it through the tools you provide, whether those tools are search, tags, faceted filters, dynamic aggregators, voting mechanisms, pinboards, friend updates, or other tools.

Forgetting about a predefined organization is kind of an exciting, liberating proposition. All that effort in grouping and sorting your topics — it doesn't matter in the end. What you need to focus on instead is the information, and provide a way for users to filter it themselves.
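To make the metadata-and-filter model a little more concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Topic` class, the `filter_topics` function, and the sample titles, tags, and vote counts do not come from Mark's or Weinberger's material or from any particular help authoring tool.

```python
from dataclasses import dataclass, field


@dataclass
class Topic:
    """A self-contained help topic with free-form metadata tags (hypothetical model)."""
    title: str
    tags: set[str] = field(default_factory=set)
    votes: int = 0


def filter_topics(topics, required_tags):
    """Return the topics carrying every tag the reader selected, most-voted first."""
    wanted = set(required_tags)
    matches = [t for t in topics if wanted <= t.tags]
    return sorted(matches, key=lambda t: t.votes, reverse=True)


# Illustrative data only; the titles, tags, and vote counts are made up.
topics = [
    Topic("Create a calendar event", {"calendar", "how-to"}, votes=12),
    Topic("Share a calendar", {"calendar", "how-to", "sharing"}, votes=30),
    Topic("Calendar permissions reference", {"calendar", "reference"}, votes=7),
]

how_tos = filter_topics(topics, {"calendar", "how-to"})
print([t.title for t in how_tos])
```

The writer's job in this sketch is only to write the topics and tag them. The grouping and ordering happen at read time, based on whatever tags the reader picks.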

Relating "Every Page Is Page One" to "Include It All. Filter It Afterward"

Exactly how does the idea of "Every Page Is Page One" connect to the other idea of "Include It All. Filter It Afterward"? In both cases, we've moved away from the book paradigm to the single page paradigm. In the first case, users are viewing one page on ten different sites due to the low cost of information access. Viewed in isolation, the pages aren't browsed in a larger table of contents. To the user, each page is the first and only page viewed.

In the second example, "Include It All," the individual pages of information are also disconnected from a predefined grouping. The users (not necessarily the content creators) define the way the content is grouped, sorted, and organized. In this case, every page is page one as well because a predefined sequential ordering has been replaced by a dynamic, user-driven method for filtering content. Every page has to be retrievable as an independent object so that it can be reordered, sorted, and organized in various user-defined orders. Again, every page is page one to the user.

Analyzing Assumptions

Overall, I really enjoyed this argument. I find it deep and insightful. There's a lot of truth to it, especially for Internet behavior. However, I think some of the assumptions, when viewed in the context of help material, push the analogy a little too far. I'll examine several assumptions and consider a few counterarguments.

Assumption #1: Bounce Rates and Next Page Flow

(12/8/2012 Note: Based on the comments on this post, I updated this section on bounce rates to be more accurate with the Every Page Is Page One argument.)

I originally thought the Every Page Is Page One style meant that a user jumped from site A to site B to site C, but Mark later explained that it's not just jumping among sites, it's jumping around within a single site as well. For example, users tend to jump non-linearly from one site section to another in the same way a user might jump around in a book, moving from page 3 to page 62 to page 15 to page 99, and so forth.

Essentially, any non-sequential, non-linear movement through your help content creates an Every Page Is Page One experience, because with each new page, the reading experience resets. The reader doesn't bring over the knowledge and context from the previous page.

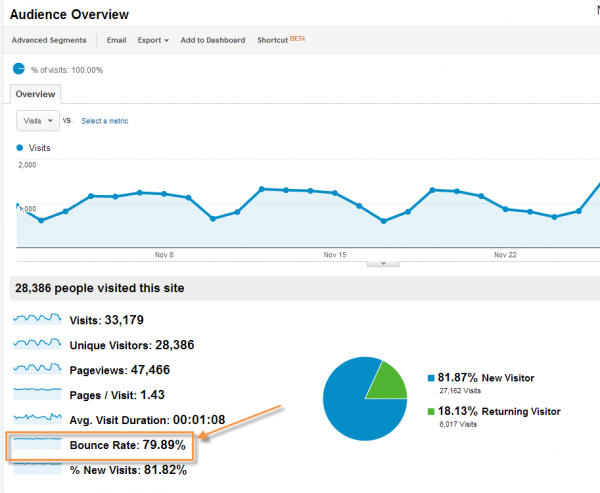

Whether jumping from one domain to another, or jumping around within the same domain, I think bounce rates might be a relevant web analytic to analyze. A bounce means a visitor viewed just one page on your site before leaving. On my blog, 79.89% of the time, readers check out just one page before bouncing to some other site.

This means that for 80% of my readers, the idea of "Every Page Is Page One" holds true. The other 20% dig deeper, sometimes visiting several pages of my site on their visit. Since I don't have a linear sequence to my blog posts, the movement from one blog post to another aligns with the Every Page Is Page One experience as well.
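For readers who want to see the arithmetic behind a bounce rate, here is a rough Python sketch based on the definition above (a visit that views just one page). The `bounce_rate` function and the session data are hypothetical. Analytics tools have their own definitions and edge cases, so treat this as an approximation of the idea rather than how any specific product calculates it.

```python
def bounce_rate(sessions):
    """Fraction of sessions in which the visitor viewed exactly one page."""
    if not sessions:
        return 0.0
    bounces = sum(1 for pages in sessions if len(pages) == 1)
    return bounces / len(sessions)


# Made-up sessions: each inner list is the pages one visitor viewed, in order.
sessions = [
    ["/post-a"],
    ["/post-b"],
    ["/post-a", "/post-c"],
    ["/post-d"],
    ["/post-b", "/post-a", "/post-e"],
]

rate = bounce_rate(sessions)
print(f"{rate:.0%}")  # 3 of the 5 sessions are single-page visits
```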

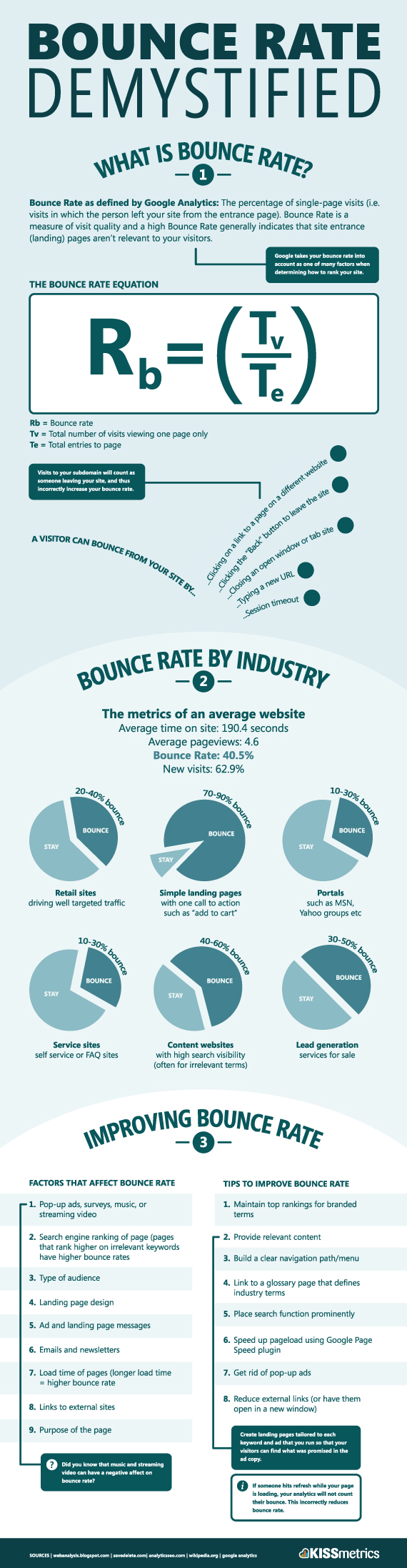

Non-blog websites often have a lower bounce rate. Kissmetrics has an infographic showing bounce rates for different types of websites.

As you can see, the bounce rates vary by the type of site. A service site (self-service or FAQ site) supposedly has only a 10-30 percent bounce rate.

Blast, a marketing and analytics site, provides some additional bounce rates for different genres of content:

- 40-60% Content websites

- 30-50% Lead generation sites

- 70-98% Blogs

- 20-40% Retail sites

- 10-30% Service sites

- 70-90% Landing pages

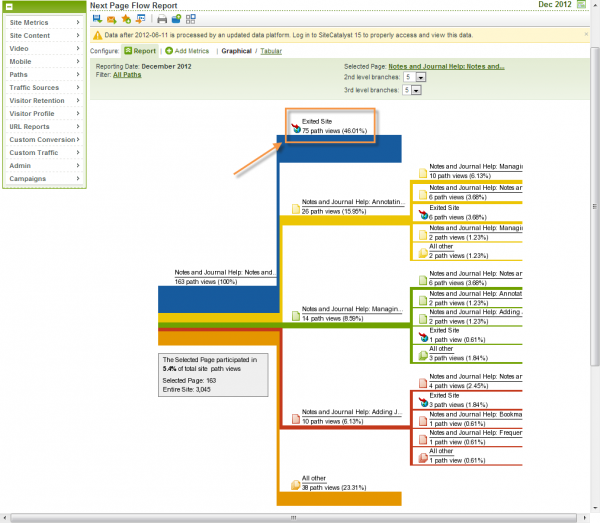

But what about a help system? I use Omniture rather than Google to track hits on some of my help material. Omniture doesn't have a bounce rate percentage, but it does have a related feature called "Next Page Flow." Here's the Next Page Flow report for one of my help products. The bounce rate (or percentage of people who exited the site after viewing the first page) was 46%.

The graph also shows the next path users took from the landing page, which can help in determining the content most important to users.

I don't have a linear sequence that users are supposed to follow through the help material. Because I write in an Every Page Is Page One style by default, my topics tend to be large, self-contained units of information. I arrange about a dozen options in the table of contents, and there really isn't an established order or sequence to move through them (for example, see my Calendar help), so just looking at the next page flow doesn't tell me much. But it does point out that the path away from the homepage varies quite a bit. 16% of users clicked the yellow path, 9% clicked the green path, 6% clicked the red path, 23% clicked the orange path ("Other"), and 46% exited the site. There is no clearly defined path that users take through help content.
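A breakdown like the one above can be approximated from raw session data. The Python sketch below is an assumption about how such a report might be computed, not a description of how Omniture builds its Next Page Flow. The `next_page_flow` function, the `"(exit)"` label, and the sample paths are all invented for illustration.

```python
from collections import Counter


def next_page_flow(sessions, landing_page):
    """Share of sessions that moved from landing_page to each next page, or exited."""
    counts = Counter()
    for pages in sessions:
        if not pages or pages[0] != landing_page:
            continue  # only count sessions that started on the landing page
        counts[pages[1] if len(pages) > 1 else "(exit)"] += 1
    total = sum(counts.values())
    return {page: n / total for page, n in counts.items()} if total else {}


# Made-up sessions; the last one started elsewhere and is ignored.
sessions = [
    ["/help"],
    ["/help", "/help/events"],
    ["/help", "/help/sharing"],
    ["/help"],
    ["/blog", "/help"],
]

flow = next_page_flow(sessions, "/help")
for page, share in sorted(flow.items(), key=lambda item: -item[1]):
    print(f"{share:.0%}  {page}")
```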

If I had burst a FrameMaker book into a sequential reading experience online, then I could analyze the Next Page Flow to see if readers really proceeded to the next page they were supposed to. But since I don't follow this practice (what Mark calls "Frankenbooks"), I can't analyze the behavior.

I bring up bounce rates to point out how frequently users bounce around online, especially from one site to another. The bounce rates for my help products average 45 to 65 percent. Clearly, readers do not read sequentially online. Not only do they move from site to site, they also move in a variety of paths within a site.

The Next Page Flow can be helpful, however, in determining the related topics to present in a specific help topic. For example, in the Next Page Flow above, if a lot of users clicked to topic R after viewing topic J, then I might put some links on topic J pointing to topic R and vice versa. Such a situation might occur if topics R and J are closely related or use confusingly similar terminology.
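The topic J to topic R idea can be sketched in Python by counting how often one page directly follows another across sessions. The `suggest_related_links` function, the `min_count` threshold, and the sample sessions are assumptions made up for this example. A real analytics export would need its own parsing.

```python
from collections import Counter


def suggest_related_links(sessions, min_count=2):
    """Map each topic to the topics readers most often viewed immediately after it."""
    transitions = Counter()
    for pages in sessions:
        # Pair each page with the page viewed right after it in the same session.
        for current, following in zip(pages, pages[1:]):
            if current != following:
                transitions[(current, following)] += 1

    suggestions = {}
    for (current, following), n in transitions.most_common():
        if n >= min_count:
            suggestions.setdefault(current, []).append(following)
    return suggestions


# Made-up sessions: readers went from topic J to topic R twice.
sessions = [
    ["topic-j", "topic-r"],
    ["topic-a", "topic-j", "topic-r"],
    ["topic-r", "topic-j"],
    ["topic-j", "topic-b"],
]

links = suggest_related_links(sessions)
print(links)
```

The sketch only reports the direction readers actually traveled. Whether to add the reciprocal link (from R back to J) stays an editorial decision.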

Assumption #2: Users Want Tools to Order Help Content Their Own Way

Many of the sites mentioned as examples that allow users to organize content their own way — Twitter, Digg/Reddit, LinkedIn, Pinterest, and so on — don't focus on help material. Their content is more entertaining, social, and fun. As such, users are more inclined to engage by tagging, sharing, pinning, and posting content.

In contrast, help material is less pleasant. About the only help site I know of that incorporates a social tool (other than search) is Stack Overflow. On Stack Overflow, some users ask questions and others provide answers. You can vote the most useful answer to the top. This method works well, but by and large people viewing help are in an entirely different state of mind than those using social tools. In help systems, users are angry, frustrated, impatient, struggling to understand, etc. Do they really want to pin some of their favorite instructions on a pinboard designed for your product?

I remember seeing a feature in Flare's webhelp skin that I thought was amusing. You could bookmark your favorite help topics so that if you wanted to quickly access them, you could easily view all the topics you had bookmarked (at least until you cleared your browser's cache). I think I used that feature maybe once or twice out of novelty, even though I've used Flare's help many times.

As long as help remains instructional material, it probably will never succeed in the social/interactive/gaming/playing space. But that doesn't mean we can't invent new tools and find user-driven organization systems that work for help scenarios.

Voting on a help topic might be a good idea. And aggregating the most popular topics would also be helpful. Showing the most recent comments can increase engagement, especially if you respond to the users' questions. Providing faceted filters in search results can also be powerful. For search, it might be more effective to integrate a Google Custom Search. We are still so young in exploring these tools, but the options are there and will continue to expand as the web matures.

In the absence of any other tools to assist with organization, some form of guided organization is probably better than none at all.

Assumption #3: This Philosophy Doesn't Address the Learning Situation

When we talk about users visiting 1 page on 10 different sites instead of 10 pages on 1 site, we're probably not talking about someone who wants to learn a tool. If someone is in find mode, searching for a specific answer in a sea of information, it makes sense for the user to constantly search from site to site rather quickly for the information.

For example, my wife was recently trying to turn on iTunes' Home Sharing for her new computer so she could hear her playlist from her iPhone. She said she looked briefly in iTunes' help (which, she noted, wasn't helpful) and then searched on Google but didn't find anything. Then she gave up.

Contrast this behavior with someone who says, "I would like to learn Adobe Illustrator better." This latter person, with a goal of learning, may want to progress through a series of tutorials or sequential instructions that start simple and become more advanced.

One of the most popular e-learning sites, Lynda.com, does exactly this. The online instructors have about 20-30 tutorials for a specific software application, and many of the ideas build on each other in the course. You can skip around as you learn a new software tool, but you don't usually hop from Lynda.com to YouTube.com to Vimeo.com looking for ways to learn Illustrator. If you want to learn, you become accustomed to a particular site and watch or view multiple topics from a course list or table of contents.

In learning scenarios, every page is not page one unless the tutorial is particularly bad. That said, e-learning tutorials should allow users to skip around, moving to topics that interest them rather than being forced into a specific order. The way Lynda.com tutorials are broken up into small segments (5-minute videos) does allow users to jump around in the order they want.

Assumption #4: Not All Help Content Is Ubiquitous and Online

When help information yields a lot of search results on the web, the access cost of the help is undeniably low. For example, if you have a question involving WordPress, google it and you'll probably find a similar answer on 20 different sites (including mine). Click one and if you don't find the answer in 20 seconds, try another, and another. There's no loyalty to any particular site or author.

However, if you have a question about a more specialized application that doesn't have a lot of competing websites with similar information, the value of the help is much higher. In many companies, the applications don't have any help material other than what the company provides. For example, my colleague writes help for an application that manages a supply chain process. If a user doesn't find the answer in the help, will he or she start googling the question on the web? If so, there won't be any answers there.

The Every Page Is Page One philosophy assumes that help material is ubiquitous enough to have multiple competing sources on the web. If the help material is not all over the Internet, if it is rarer, the user may value the content more highly, treating it as an essential and unique guide.

However, even if the content is not ubiquitous on the web, the user may treat the content with the same low-cost behavior because the web has rewired our brains to function this way. Nicholas Carr in The Shallows explains that Google has rewired our brains with shorter attention spans and made us more prone to distractions. I'm sure it has affected the way we search for information as well.

A user who searches in a specialized application's help file and doesn't immediately see an array of sites providing similar answers may close the help file and try other approaches, such as asking a friend, calling support, using trial and error, or simply giving up. Google has trained our brains to hunt and peck from a variety of sources rather than plodding through one big thick manual.

Still, users who are forced to rely on one site for information will probably give it more than a one-page glance.

Conclusion

One larger question to ask is whether the behavior of someone using a help system is the same as the behavior of someone using the web. Does help stand in a unique genre of material, one that plays by different rules, even when it's on the web? Do users recognize official help material and dive more deeply into it, browsing and searching rather than immediately discarding it? Or do users behave the same way they do on the general web?

If different genres of content once prompted different behaviors, I think those differences are evaporating. Everything is converging on the web, and we should design our help to fit more comfortably online.

Although I'm wrapping up this post (at a mere 3,700 words), this is not the end of the conversation. I'm going to record a podcast with Mark Baker, from Every Page Is Page One, later in the week. Stay tuned and check back later for a continuation of the discussion.

About Tom Johnson

I'm an API technical writer based in the Seattle area. On this blog, I write about topics related to technical writing and communication — such as software documentation, API documentation, AI, information architecture, content strategy, writing processes, plain language, tech comm careers, and more. Check out my API documentation course if you're looking for more info about documenting APIs. Or see my posts on AI and AI course section for more on the latest in AI and tech comm.

If you're a technical writer and want to keep on top of the latest trends in tech comm, be sure to subscribe to email updates below. You can also learn more about me or contact me. Finally, note that the opinions I express on my blog are my own points of view, not those of my employer.